More than a decade ago, I moved from software development over to quality, becoming a software developer in test. I discovered that something important was missing: Although we were writing automation to test the system, when the automation ran in the lab, the resulting artifacts offered little or no information about what happened at runtime.

If a check failed, we got an error, and if it was an API test, we got an exception and stack trace, but mostly we were still in the dark. Code inspection to follow up was expensive guesswork, and it just was not enough; we usually had to reproduce problems and debug through to turn the problem into an action item.

Sometimes that action was a product bug, but more often it was just something that a quality assurance person had to do to get the automation running again. The QA team sank many hours into trying to resolve automation failures into action items.

I tried to solve the problem with log statements in the automation code. This helped in limited scenarios, but it soon became clear that logging was an awkward and poor solution.

Years later, I realized that the only good solution required a big-picture perspective on the software business. Just trying to amplify what manual test did, or what the QA role did, was not enough.

Today, QA is increasingly charged with ensuring that the entire software delivery team instills quality in their work, according to the World Quality Report 2020/21. But you have to start with the question of what automation for quality management can do for the business. I call this problem space "quality automation." Quality automation addresses all roles and functions of the business, including test, QA, developers, product owners, executives, and others, and how automation can help the whole business manage functional quality of the software system being worked on.

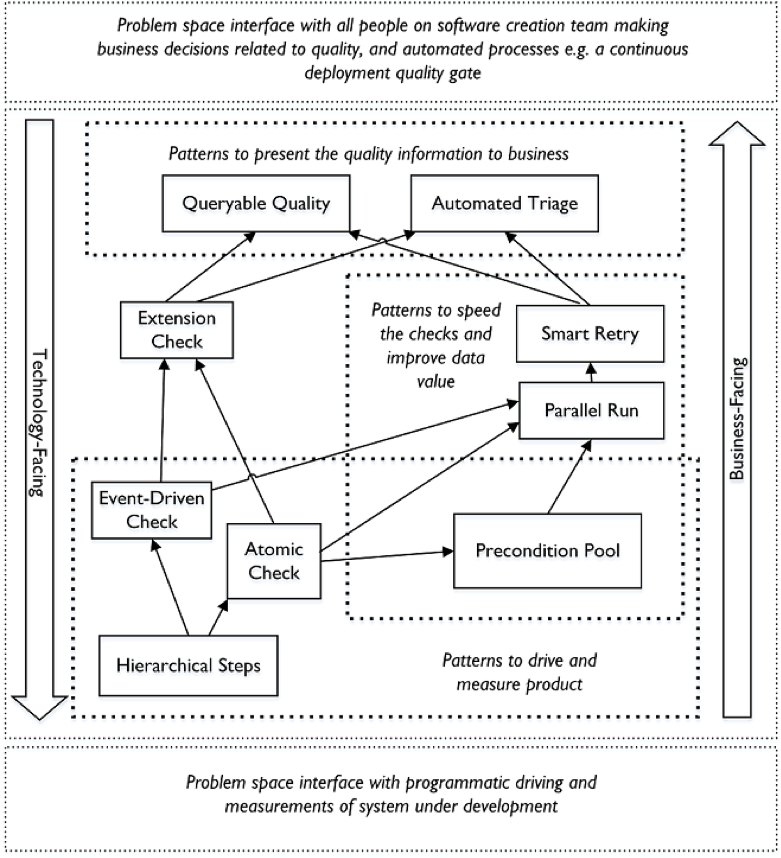

MetaAutomation is a pattern language I invented to achieve quality automation, starting with a focus on writing and shipping software faster and at higher quality than before. (I also wrote a book about it.)

When quality automation replaces "test automation," and you aim for optimal solutions—rather than ones constrained by tradition and historical accidents—you can create vastly more effective automation for measuring and managing quality.

This, in turn, supports shipping software faster, at higher quality, with better communication and collaboration, and with happier teams. Here's how it works.

Take hierarchical steps

People doing manual testing pay close attention to measure correct behavior and find issues, including sometimes using extra tools for instrumentation, data generation, screenshots, etc. The job requires it. By comparison, automation can leave us, and the broader team, mostly blind.

My solution is in the form of a pattern that we all use every day, anytime we perform or communicate any procedure that is (or could be) repeatable: hierarchical steps. This pattern represents a procedure as a rooted, ordered, multi-way tree where each step (or node) comprises all the child steps of that step.

Hierarchical steps is also the least dependent pattern in MetaAutomation, as the pattern map below shows. (You can also find open-source examples of how MetaAutomation works on GitHub.)

Applying this pattern allows the automation to pay attention and record what it is doing in a powerful, flexible structure for later viewing, drill-down, and analysis. The automation isn't blind anymore, and detailed performance information is reported too.
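To make the idea concrete, here is a minimal Python sketch of how automation might record hierarchical steps with timing as it runs. It is an illustration under my own assumptions, not the MetaAutomation implementation: the names `Step`, `StepRecorder`, and `step` are hypothetical, as is the example login check.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Step:
    """One node of the tree: a named step plus its ordered child steps."""
    name: str
    children: list["Step"] = field(default_factory=list)
    elapsed_ms: float = 0.0
    status: str = "pass"


class StepRecorder:
    """Hypothetical recorder that builds the step tree while the check runs."""

    def __init__(self, root_name: str) -> None:
        self.root = Step(root_name)   # container node for the whole check
        self._stack = [self.root]     # path from the root to the current step

    @contextmanager
    def step(self, name: str):
        node = Step(name)
        self._stack[-1].children.append(node)  # preserves sibling order
        self._stack.append(node)
        start = time.perf_counter()
        try:
            yield node
        except Exception:
            node.status = "fail"
            raise
        finally:
            # Timing is recorded for every nested step, pass or fail.
            node.elapsed_ms = (time.perf_counter() - start) * 1000.0
            self._stack.pop()


recorder = StepRecorder("Check: user can log in")
with recorder.step("Open login page"):
    with recorder.step("Launch browser"):
        pass  # product-facing actions would go here
    with recorder.step("Navigate to login URL"):
        pass
with recorder.step("Submit credentials"):
    pass
```

Nesting the `with` blocks mirrors the nesting of the steps, so the recorded tree has the same shape as the procedure itself.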

Even with open eyes, automation is still very different from manual testing. With automation, the code has to make a distinction at some point, to decide whether the check passed or failed, and it doesn't have a human's deductive smarts or observational skills. It can run very fast, though, at all hours, and in a lab or on the cloud.

Manual testing and automation can each catch issues that the other cannot.

How to deal with bugs

Repeatable automation is essential to the fast, stable quality measurement needed to keep software quality moving forward, but there's another problem: It is poor at finding bugs. Some managers in the field therefore devalue it; they believe the still-influential meme that "test is all about finding bugs," which traces back to an error Glenford Myers made in 1979 (found on pages 5-8 of the original edition of his book The Art of Software Testing).

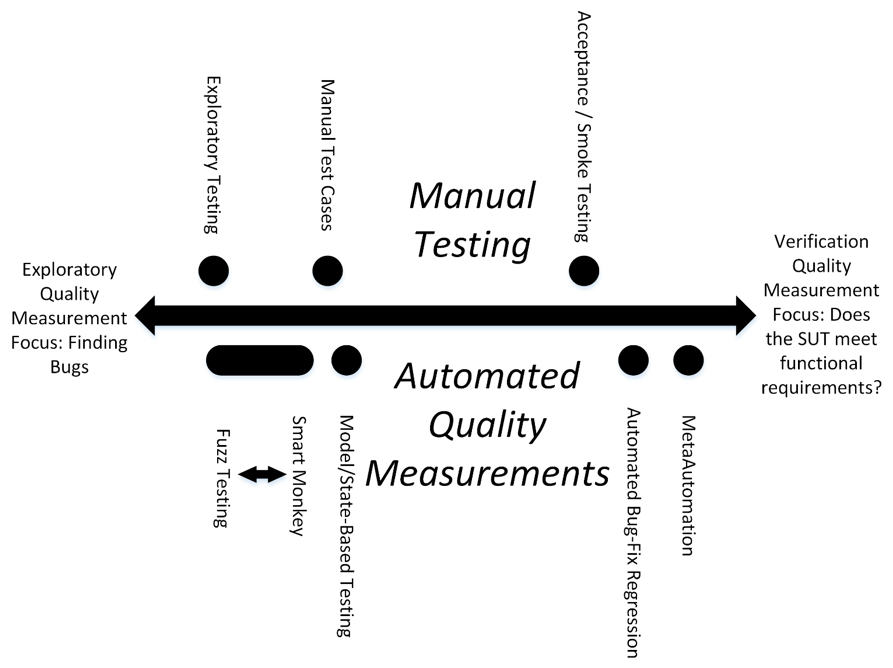

Functional quality for software under development divides into two foci: searching for bugs, and answering the question of whether the system does what we need it to do. The team must do both. Back in 1979, it was enough, practically speaking, to look for bugs, just as Myers said.

Today, though, software is vastly more complex and more important to our lives. We must verify both behavior and performance, so I made this testing-types diagram:

Figure 1. Testing types: Manual at top vs. automated down below, and finding bugs at left vs. verification at right.

Activity toward the right side supports creating and shipping software faster. Done correctly, it quickly checks to see that the highest-priority behaviors of the product have not broken with the code churn that comes from adding new features, refactoring, or fixing bugs.

Readers experienced in the field will notice that there are some types of testing, such as smart monkey, missing from this diagram—there was not room for nearly everything.

How MetaAutomation can help quality

MetaAutomation is everything that automation can help with, from driving and measuring the product to communicating quality-related knowledge to the people and automated processes of the business.

The current iteration of the pattern language MetaAutomation emphasizes speed, reliability, and transparency. There are many other ways that automation can help that do not appear in the pattern map below. For example, AI or machine learning is a complementary technique that is coming online, but it is not suited for fast and focused verifications, so the diagram below does not show it.

MetaAutomation can be used as an implementation guide to quality automation, with a focus on shipping higher-quality software faster, with radically better communication around quality.

Automation can help with the communication of quality-related information to the business. When automation pays attention to the hierarchical steps pattern, it can do some powerful things to make that communication faster and more reliable, detailed, trustworthy, and accessible.

Any team member filling any role on the team can view the automation and drill down as desired, from the business-facing steps near the root of the tree to the fine product-facing details at the leaf steps.
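As a rough illustration of that drill-down, the Python sketch below renders the same step tree at two levels of detail. The `Step` class, the `render` function, and the order-placing example are assumptions made for this sketch, not part of the published MetaAutomation samples.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    name: str
    children: list["Step"] = field(default_factory=list)


def render(step: Step, max_depth: int, depth: int = 0) -> list[str]:
    """Return indented lines for `step`, descending at most `max_depth` levels."""
    lines = ["  " * depth + step.name]
    if depth < max_depth:
        for child in step.children:
            lines.extend(render(child, max_depth, depth + 1))
    return lines


check = Step("Check: customer places an order", [
    Step("Add item to cart", [
        Step("Open product page"),
        Step("Click 'Add to cart'"),
    ]),
    Step("Pay for order", [
        Step("Enter card details"),
        Step("Confirm payment"),
    ]),
])

summary = render(check, max_depth=1)  # business-facing steps near the root
detail = render(check, max_depth=2)   # product-facing leaf steps as well
```

A product owner might stop at `summary`, while a developer chasing a failure would read `detail`; both views come from the same recorded data.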

The pattern map below shows MetaAutomation filling the quality automation space. Considering the whole space, rather than focusing on just the system-under-test part of it as "test automation" does, helps to optimize the delivery of quality management value to the business.

Figure 2. The MetaAutomation pattern language is an optimal way to approach the issue of quality automation. This diagram also shows the interfaces with the people and processes of the business at top, and the technology of driving and measuring the system under test at bottom.

Other advantages of MetaAutomation

MetaAutomation implements the quality automation space in a platform-independent, language-independent way. It emphasizes what automation can do with smart design choices to deliver quality knowledge in a way that is faster, more detailed, and more trustworthy.

The forward focus (toward the right in the testing-types diagram above) keeps the software project moving forward and makes software development faster by showing, with speed, reliability, clarity, and trustworthiness, whether the system still does what it is supposed to do. The deep and trustworthy detail of communication brings transparency to the work of individual QA people and developers.


With community input, I've continued to develop the MetaAutomation pattern language to create the clarity I craved around how automation can best help software quality management. To my delight, I discovered that some very powerful techniques emerged: the elimination of false positives and false negatives, a resolution for Beizer's Pesticide Paradox, and data with a structure that makes it accessible to all roles.

In the case of repeatable checks, a failed check makes clear which steps are blocked. The structure of the data also enables cross-tier IoT app driving and verifications. This is implemented in Sample 3 on GitHub.
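One way to picture the "blocked" idea is the Python sketch below: steps run in order, and once a step fails, every later step is marked blocked rather than run. This is my own simplified assumption about how such a result could be represented, not the code in the GitHub samples; `Step`, `run`, the status strings, and the example check are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Step:
    name: str
    action: Optional[Callable[[], None]] = None  # leaf steps do the work
    children: list["Step"] = field(default_factory=list)
    status: str = "not run"


def run(step: Step, blocked: bool = False) -> bool:
    """Run `step` depth-first, in order; return True if it passed.

    Once any step fails, every later step is marked "blocked" instead of run.
    """
    if blocked:
        step.status = "blocked"
        for child in step.children:
            run(child, blocked=True)
        return False
    ok = True
    try:
        if step.action is not None:
            step.action()
    except Exception:
        ok = False
    for child in step.children:
        # After the first failure, later siblings are blocked, not run.
        if not run(child, blocked=not ok):
            ok = False
    step.status = "pass" if ok else "fail"
    return ok


def fail_login() -> None:
    raise AssertionError("login button not found")


check = Step("Check: user updates profile", children=[
    Step("Log in", children=[
        Step("Open login page", action=lambda: None),
        Step("Submit credentials", action=fail_login),
    ]),
    Step("Edit profile", children=[
        Step("Change display name", action=lambda: None),
    ]),
])
passed = run(check)
```

Here the failure in "Submit credentials" marks "Log in" and the root check as failed, while "Edit profile" and its child are reported as blocked, so a reader can see at a glance what was never measured.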

These techniques are inevitable as software quality becomes more important every year. The era of automation running blind, and bleeding business value as a result, won't last forever.
