Every organization wants to be cyber-resilient. Most teams attack the problem from the bottom up, using a horizontal software development lifecycle (SDLC) mindset built on practices such as security requirements, threat modeling, and code scanners. These approaches are useful, but they don't scale well.

Stakeholders don't know what to ask for around security requirements, threat modeling is inconsistent—depending on which team does the modeling—and code scanners miss 50% or more of security issues. But there's a better way.

Focus on your vertical pipeline—one that goes from security policies generated from standards and well-known industry frameworks to procedures, where your SDLC starts. Policies provide a guardrail that guides the SDLC so that you build in security.

Because the pipeline is vertical in nature, any work done in your SDLC is automatically rolled up into higher levels, giving you a near-real-time picture of the security posture of your application portfolio.

Here's how you can create a policy-to-execution pipeline in a platform-independent way.

Address the policy-to-execution gap

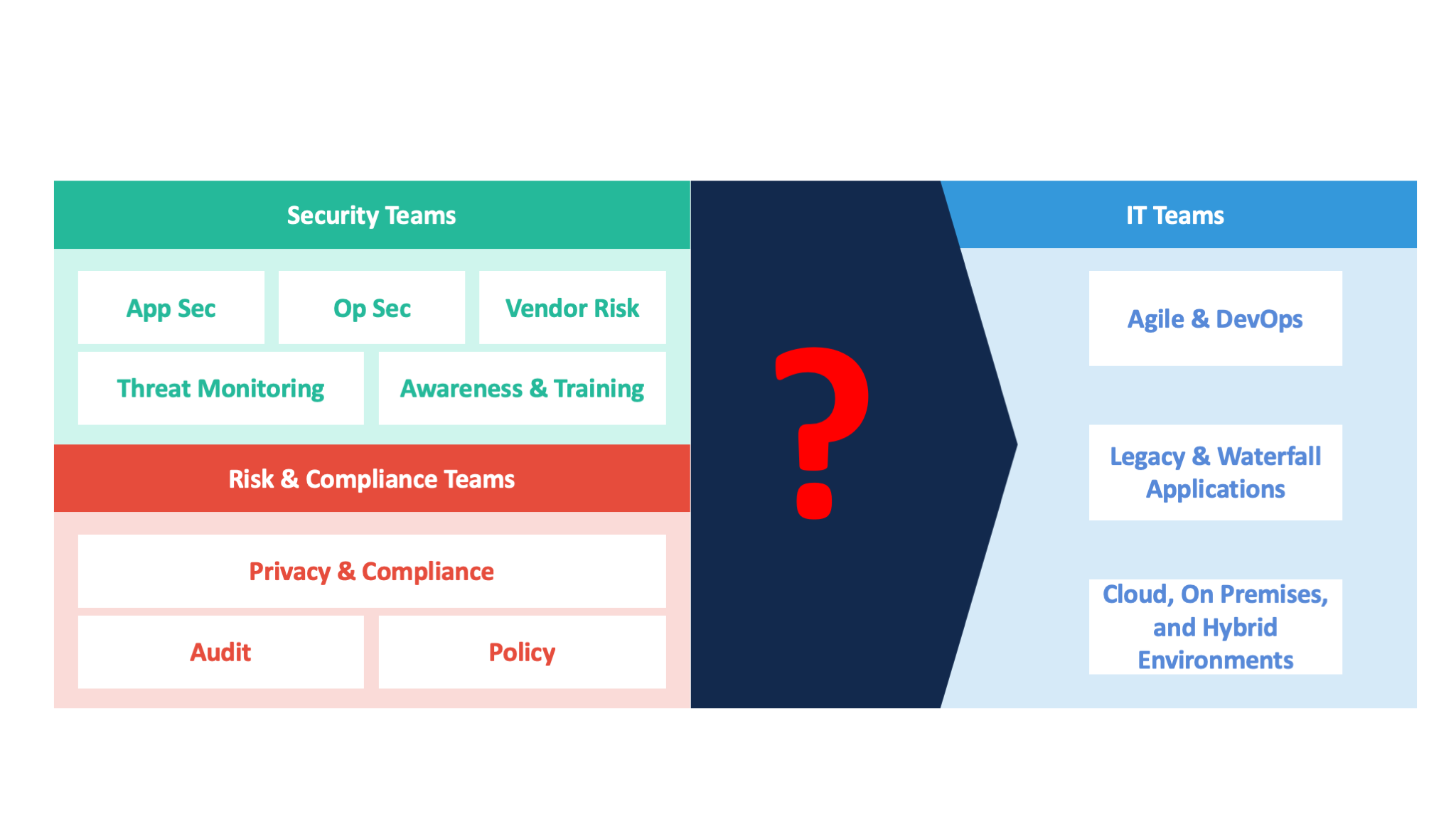

In a typical enterprise focused on security, you usually see three groups of people:

- Security teams, focused on application and operational security, vendor risk management, threat monitoring, and training

- Risk and compliance teams, focused on the compliance lifecycle dealing with privacy, auditing, and policy creation

- IT teams (including both development and operations), focused on activities related to agile, DevOps, and hybrid infrastructure management

Figure 1. A policy-to-procedure gap often exists between the security and the risk and compliance teams, which create policies, and the IT teams, which must execute them.

The security and the risk and compliance teams create policies based on standards such as ISO/IEC 27001, and they hand those policies to the IT teams, assuming that IT can execute against them.

But most IT teams don't understand how to interpret such high-level, abstract policies. They're used to dealing with procedures related to caching, or identity and access management.

The fundamental challenge, therefore, is to translate abstract, high-level policies into something that IT teams can execute. This is the policy-to-execution gap.

Use integrated processes to achieve policy-to-execution

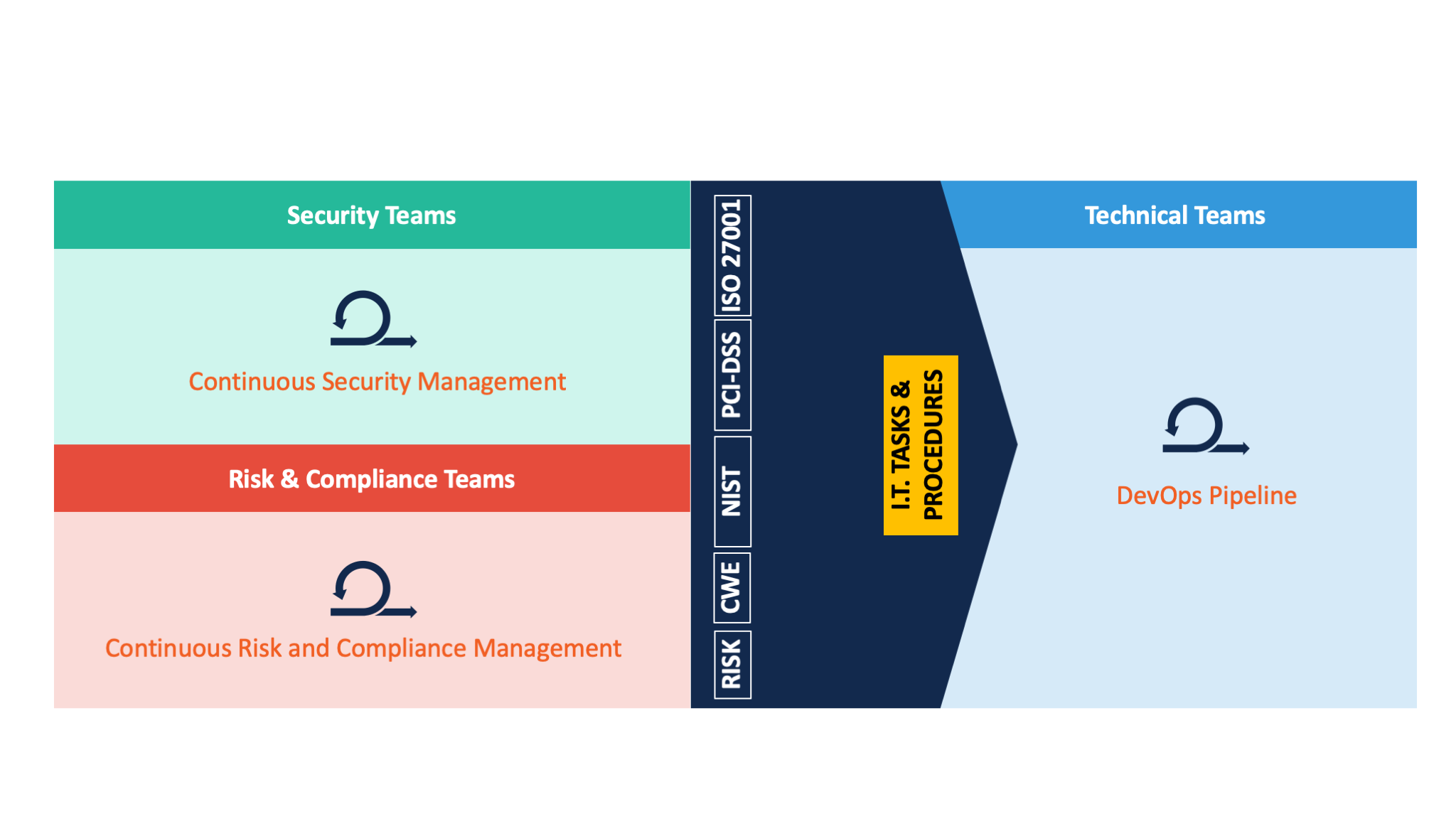

Each team has its own set of processes and workflows. Security teams have their own process focused on continuous security management. Similarly, the risk and compliance teams have a continuous risk and compliance management workflow, and technical teams have a DevOps pipeline.

Figure 2. There's often a disconnect between the processes created by the security and the risk and compliance teams (left), and the technical teams (right).

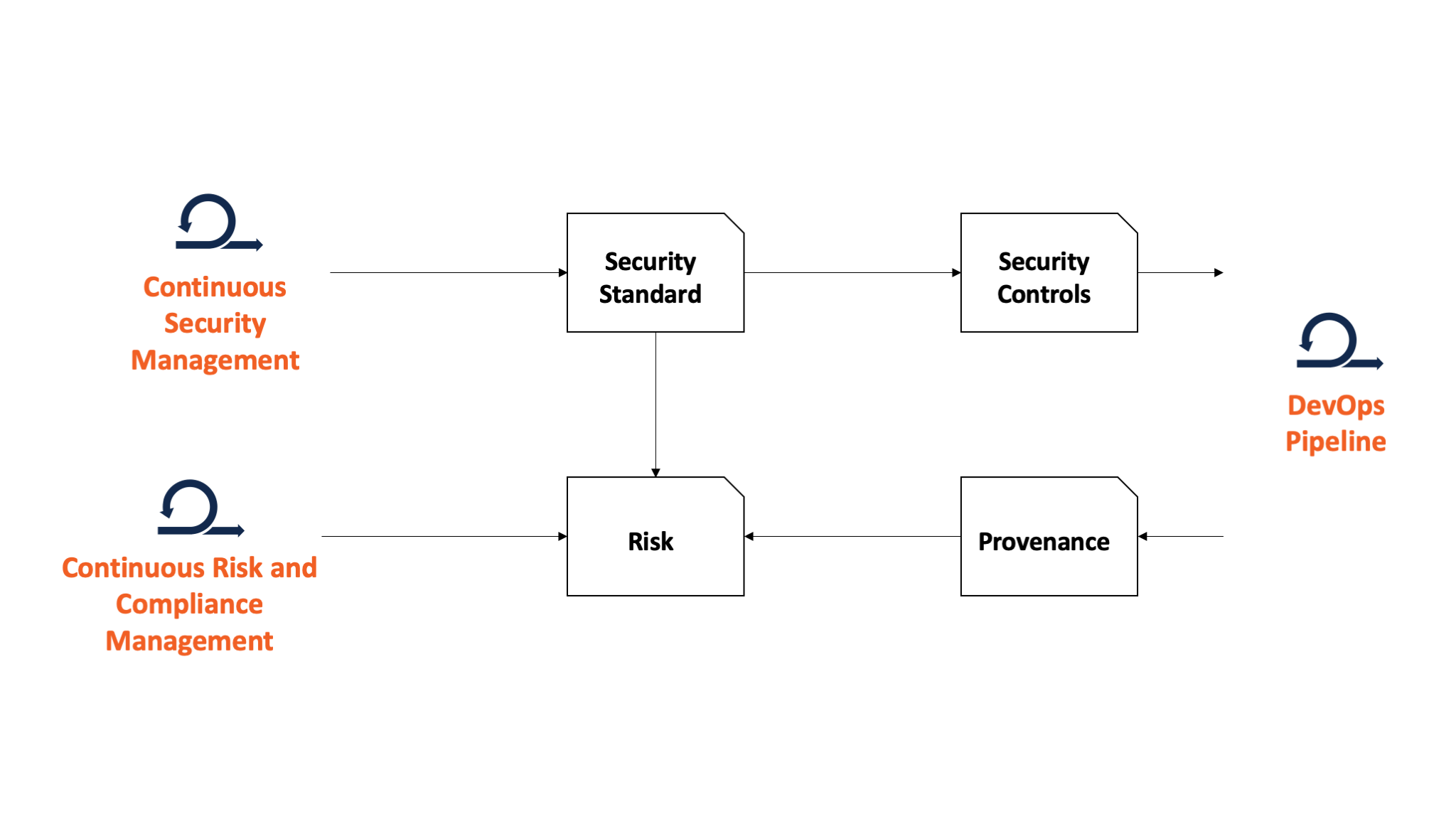

Figure 3. Bridging disparate processes among the security, the risk and compliance teams, and the technical teams requires creating common standards that are meaningful to all sides.

The challenge is integrating each of these disparate processes into something that is meaningful for the business in terms of risk management. The way you achieve this is by starting from security teams that produce a security standard.

In a security standard you have several clauses. These clauses then make their way into two other workflows:

- Technical teams, where security controls are generated from clauses in the standards

- Risk and compliance teams, where these clauses are measured against risk to determine which clauses are higher priority

As the technical teams execute on their day-to-day activities, what comes out of their work is a confirmation as to whether or not the policies have been met. You can achieve this attestation by mapping your security controls to predefined application architectures. The reason for mapping to architectures, rather than individual applications, is to reduce the overhead of duplicating an accepted set of controls for each application.
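One way to picture this mapping is as a small data model. The sketch below is illustrative only and is not taken from any particular tool: the clause IDs (`CL-1`, `CL-2`), control IDs, application names, and the `web-3tier` architecture are all hypothetical, and it assumes each control is attested as a simple pass or fail. Because applications share an architecture, the controls are defined once and the status rolls up per application.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Control:
    control_id: str  # hypothetical ID of a technical security control
    clause_id: str   # clause in the security standard that the control implements


@dataclass
class Architecture:
    name: str
    controls: list[Control]
    # control_id -> True once pipeline evidence shows the control is satisfied
    attested: dict[str, bool] = field(default_factory=dict)

    def attest(self, control_id: str, passed: bool) -> None:
        """Record the pass/fail result for one control."""
        if control_id not in {c.control_id for c in self.controls}:
            raise KeyError(f"unknown control: {control_id}")
        self.attested[control_id] = passed

    def clause_status(self) -> dict[str, bool]:
        """A clause counts as met only if every control mapped to it has passed."""
        status: dict[str, bool] = {}
        for c in self.controls:
            ok = self.attested.get(c.control_id, False)
            status[c.clause_id] = status.get(c.clause_id, True) and ok
        return status


def portfolio_posture(apps: dict[str, Architecture]) -> dict[str, float]:
    """Roll up: fraction of clauses met for each application's architecture."""
    posture: dict[str, float] = {}
    for app, arch in apps.items():
        status = arch.clause_status()
        posture[app] = sum(status.values()) / len(status) if status else 0.0
    return posture


# Hypothetical example: two applications share one predefined architecture.
web = Architecture(
    "web-3tier",
    [Control("C-1", "CL-1"), Control("C-2", "CL-1"), Control("C-3", "CL-2")],
)
web.attest("C-1", True)
web.attest("C-2", True)
web.attest("C-3", False)

posture = portfolio_posture({"billing": web, "portal": web})
```

Attesting the three controls once covers both applications, which is the overhead saving that mapping to architectures is meant to deliver.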

Integrate the tools

It all starts with an assessment of business risk. Through that assessment you identify the security business requirements, which in turn make their way into architecture or service definitions you capture using modeling tools.

This architecture then undergoes threat-modeling activities, the results of which go into the security business requirements repository that integrates with your DevOps pipeline. In the pipeline, you integrate your application lifecycle management (ALM) tools, build tools, and static/dynamic analysis testing tools. Once a build is successful, the artifact is moved into a repository that includes configuration files, code files, and scripts.

From the build repository, you execute a deployment into a test environment that uses both functional and nonfunctional testing tools to address quality. If an error is discovered, it gets pushed back to your ALM, and the DevOps pipeline kicks off again. If everything passes, the next stage of the pipeline goes back to the repository and deploys into your preproduction and production environments, where you can conduct real user testing.

If real user testing uncovers any errors, the build goes back to your test environment for retesting. If testing confirms the failure, you must return to the start of your DevOps pipeline and kick it off again.

Once the build is in production, your SecOps tools monitor the production environment. If any vulnerabilities are found, they are brought back again into your DevOps pipeline and security policies are modified as needed. This creates a full circle, from business risk management all the way into your DevOps pipeline, with a feedback loop for continuous improvement.
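The routing described in this section can be sketched as a small state machine. This is a simplified illustration, not a description of any specific toolchain: the stage names are hypothetical labels, preproduction and production are collapsed into one stage, and sending a failed build back to the ALM is an assumption, since the text only describes the successful-build path.

```python
from __future__ import annotations

from enum import Enum


class Stage(Enum):
    ALM = "alm"                # work item at the start of the DevOps pipeline
    BUILD = "build"
    TEST = "test"              # functional and nonfunctional testing
    PRODUCTION = "production"  # preproduction/production with real user testing
    MONITOR = "monitor"        # SecOps monitoring of the production environment


def next_stage(stage: Stage, ok: bool) -> Stage:
    """Return the stage that follows `stage`, given whether it succeeded."""
    if stage is Stage.ALM:
        return Stage.BUILD  # the outcome is ignored; work always proceeds to a build
    if stage is Stage.BUILD:
        return Stage.TEST if ok else Stage.ALM  # failed build -> ALM is an assumption
    if stage is Stage.TEST:
        return Stage.PRODUCTION if ok else Stage.ALM
    if stage is Stage.PRODUCTION:
        return Stage.MONITOR if ok else Stage.TEST  # errors go back for retesting
    # MONITOR: stay while clean; findings feed back into the pipeline
    return Stage.MONITOR if ok else Stage.ALM


def trace(outcomes: list[bool], start: Stage = Stage.ALM) -> list[Stage]:
    """Follow a sequence of pass/fail outcomes and return the stages visited."""
    path = [start]
    for ok in outcomes:
        path.append(next_stage(path[-1], ok))
    return path


# An error found in production is retested, confirmed, and sent back to the start.
path = trace([True, True, True, False, False])
```

In this example the path runs ALM, BUILD, TEST, PRODUCTION, back to TEST, and then back to ALM, which mirrors the feedback loop in the text.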

Focus on relevant metrics

Think about the areas that make the most sense for the business. In our research at Info-Tech Research Group, we found three:

- Resiliency: What metrics will show the business that you can recover quickly in the event of a breach?

- Risk and compliance: What metrics will show you are in compliance with a standard or regulation?

- Speed: What metrics will show that you are not impeding the business while still remaining secure?

And here are a few metrics to consider:

- Time to patch vulnerabilities

- Most changed code areas

- Deployment frequency

- Change failure rate

- Application performance
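Several of these metrics are simple to compute once deployment and vulnerability records are available. The following is a minimal sketch under stated assumptions: the `Deployment` and `Vulnerability` record shapes and the sample data are hypothetical, and real figures would come from your ALM, build, and SecOps tools.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Deployment:
    day: date
    failed: bool  # True if the change caused a failure in production


@dataclass
class Vulnerability:
    found: date
    patched: date


def deployments_per_week(deploys: list[Deployment], period_days: int) -> float:
    """Deployment frequency, normalized to a seven-day week."""
    return len(deploys) * 7 / period_days


def change_failure_rate(deploys: list[Deployment]) -> float:
    """Share of deployments that resulted in a failure."""
    return sum(d.failed for d in deploys) / len(deploys) if deploys else 0.0


def mean_time_to_patch(vulns: list[Vulnerability]) -> timedelta | None:
    """Average time between finding and patching; None if there is no data."""
    if not vulns:
        return None
    total = sum((v.patched - v.found for v in vulns), timedelta())
    return total / len(vulns)


# Hypothetical sample data covering a 28-day period.
deploys = [
    Deployment(date(2024, 1, 3), failed=False),
    Deployment(date(2024, 1, 10), failed=True),
    Deployment(date(2024, 1, 17), failed=False),
    Deployment(date(2024, 1, 24), failed=False),
]
vulns = [
    Vulnerability(found=date(2024, 1, 2), patched=date(2024, 1, 6)),
    Vulnerability(found=date(2024, 1, 10), patched=date(2024, 1, 12)),
]

frequency = deployments_per_week(deploys, period_days=28)  # 1.0 per week
failure_rate = change_failure_rate(deploys)                # 0.25
time_to_patch = mean_time_to_patch(vulns)                  # 3 days
```

Time to patch speaks to resiliency, while deployment frequency and change failure rate speak to speed, so even a small script like this can cover more than one of the three areas.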

Take it one step at a time

In summary, you need to be aware of three things. First, there’s a fundamental gap between high-level policies and execution. Second, addressing this gap requires integrating security, risk, and technical workflows. Third, don't try to do this all at once. Start where you can inject security most easily and build from there.

Ultimately your goal is to build a culture where security is valued because it lowers risk for the business—and doesn't impede its needs. Just as there's a need to integrate development and operations activities via DevOps, you now need to integrate your security and risk teams.

Want to know more? Come to my SecureGuild online security testing conference talk, where I'll go into more detail on creating a vertical pipeline from a threat modeling perspective. The conference runs from May 20 to 21. Can't make it? Registrants will have full access to all of the presentations after the event.
