How can you take advantage of application performance monitoring (APM) products and logs to define realistic load models for continuous testing?

A realistic workload model is the core of a solid performance test. Generating load that does not reflect reality will only give you unrealistic feedback about the behavior of your system. That's why analyzing production traffic and application usage to build your performance strategy is the most important step in creating your performance testing methodology.

To help one of my clients build a realistic performance testing strategy, I built a program that extracts how its microservices are used in production. The objective was to identify the 20% of calls that generate 80% of the production load. Based on this extraction, the program guides the project in building a continuous testing methodology for the client's main microservices.
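The following is not the author's program but a minimal sketch of the 80/20 selection, assuming you already have a total hit count per service. The service names and counts are hypothetical.

```python
def pareto_services(hits_by_service, coverage=0.8):
    """Return the smallest list of services, heaviest first, whose combined
    hits reach `coverage` (0.8 = 80%) of the total load."""
    total = sum(hits_by_service.values())
    selected, cumulative = [], 0
    # Walk services from most to least called until the coverage target is met.
    for service, hits in sorted(hits_by_service.items(), key=lambda kv: kv[1], reverse=True):
        if total == 0 or cumulative >= coverage * total:
            break
        selected.append(service)
        cumulative += hits
    return selected


# Hypothetical hit counts for ten services over one day.
hits = {
    "/login": 500, "/search": 300, "/cart": 60, "/checkout": 40, "/profile": 30,
    "/help": 20, "/faq": 20, "/contact": 10, "/about": 10, "/terms": 10,
}
print(pareto_services(hits))  # ['/login', '/search']
```

Here two of ten services (20%) carry 800 of 1,000 hits (80%), so only those two would be candidates for continuous load tests.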

One of the biggest limitations is the lack of information stored in HTTP logs and in APM products. Unfortunately, too much information is missing to automatically generate the load testing scripts. Technically, if that information were available, a tool like my prototype would give you everything you need to build test scripts, test definitions, and test objectives.

From the moment you have all of your detailed HTTP traffic, you can fully automate the creation of performance tests based on situations you observe in production. Here's how.

The importance of a performance strategy

Load and performance testing is about simulating users, third-party systems, and batches and queries that interact with a system. Those simulations let you collect metrics to validate the ability of the system to handle specific situations, and each simulated situation should reflect a technical risk.

For example, how would your application handle the load created by users logging into the system? And can your system handle the daily activity, spikes related to the opening of a new office branch, or a marketing promotion?

Performance engineers analyze the results of these simulations to identify potential bottlenecks to improve the system. So, if your model doesn't reflect reality, you'll reach the wrong conclusions and make bad recommendations.

Several years ago, when I first started working on load testing projects, we collected statistics shared by the business and built models based on input from business stakeholders. But where were those numbers coming from? I quickly learned that you should never trust the numbers unless you know where they came from.

The most important stats

You need many statistics to build a realistic load model, including:

- Which use cases (user flows) to simulate during the load test. The test needs to simulate the main use cases, services, and batches used in production.

- The number of concurrent users and transactions per second needed for each user flow.

- The average think time between each screen.

- The type of network constraints your users have when using the system.

- The workload model for the context you are trying to validate, such as daily activity or a peak event.
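These statistics are related to one another. One standard way to connect them (a general queueing result, not something this article prescribes) is Little's Law for a closed workload: concurrent users N = throughput X × (response time R + think time Z). A small helper, with made-up example numbers:

```python
def concurrent_users(tx_per_second, response_time_s, think_time_s):
    """Little's Law for a closed system: N = X * (R + Z)."""
    if tx_per_second < 0 or response_time_s < 0 or think_time_s < 0:
        raise ValueError("inputs must be non-negative")
    return tx_per_second * (response_time_s + think_time_s)


def required_throughput(users, response_time_s, think_time_s):
    """The same law solved for throughput: X = N / (R + Z)."""
    cycle = response_time_s + think_time_s
    if users < 0 or cycle <= 0:
        raise ValueError("users must be non-negative and R + Z must be positive")
    return users / cycle


# Example: 10 transactions/s, 0.5 s response time, 4.5 s think time.
print(concurrent_users(10, 0.5, 4.5))     # 50.0
print(required_throughput(50, 0.5, 4.5))  # 10.0
```

Measuring throughput, response time, and think time in production lets you derive the number of virtual users rather than guess it.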

To build this model, performance engineers usually start by analyzing data from different sources, including:

- HTTP log files

- The application's database, to understand how many assets are created and when

- Tracking systems such as Google Analytics

Due to time and cost constraints, many projects force performance testers to run tests based on a workload analysis performed several months or even years ago. But you need to ask whether current production usage is still consistent with the old performance model.

In this situation, you should automate the analysis so you can continuously review your performance strategy.
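One way to automate that review, sketched under the assumption that you can export hit counts per service both for the period behind your old model and for a recent period, is to compare each service's share of the load and flag the ones that moved. The 5-percentage-point threshold and the service names are arbitrary, hypothetical examples.

```python
def usage_mix(hits):
    """Convert raw hit counts into each service's share of the total load."""
    total = sum(hits.values())
    return {service: count / total for service, count in hits.items()} if total else {}


def drifted_services(old_hits, new_hits, threshold=0.05):
    """Return {service: change in share} for services whose share of the load
    changed by more than `threshold` between the old model and current traffic."""
    old, new = usage_mix(old_hits), usage_mix(new_hits)
    changes = {}
    for service in sorted(set(old) | set(new)):
        delta = new.get(service, 0.0) - old.get(service, 0.0)
        if abs(delta) > threshold:
            changes[service] = round(delta, 3)
    return changes


# Hypothetical: the old model had no /export traffic at all.
old_model = {"/login": 500, "/search": 500}
current = {"/login": 300, "/search": 500, "/export": 200}
print(drifted_services(old_model, current))  # {'/export': 0.2, '/login': -0.2}
```

Running a check like this on a schedule turns "is the old model still valid?" into a report you can act on.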

Test it earlier: Component testing

In an agile and DevOps environment, most test engineers implement continuous testing by testing the main components of their applications earlier. Their objective is to quickly detect performance regressions during development.

Should you do continuous performance testing on all of your components, or should you be smarter, selecting only those components that are most important for your architecture?

As with the challenge of building a realistic simulation, you need to ask how to select which components need testing and what throughput to apply to them.

All of these questions should be answered the moment you have a good understanding of your production usage.


Reuse your monitoring tools

A proper performance testing strategy relies on correctly monitoring your users and how they actually use your system. If you don't know how your users interact with your application, you can only guess which features need enhancement.

APM products can help provide visibility into how your users are interacting with the application, and help you be more proactive.

That's why I built a prototype testing tool to help my clients apply the right performance testing strategy. The tool defines what needs to be tested for continuous testing and end-to-end testing.

A solution that could define your strategy

The tool I built was designed to rely primarily on the APM system the client used in production. Because of a missing module, however, I had to use the APM tool to build the strategy for component testing and HTTP access log files for end-to-end testing.

APM products can report the usage of all the services an application consumes while users are interacting with it. Because of that, we could extract current usage information about the services in the architecture.

The main constraint to using APM products is aggregation. An APM tool's data is very precise for the first hours after it is collected, when the tool keeps lots of detailed live data.

After a day, the APM tool starts aggregating the data to reduce the volume of data it must store. Because it now calculates averages, you can't examine an event that happened a month ago, since you won't have enough data points available to diagnose your issue.
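A toy example with made-up numbers shows why averaged data is a poor basis for a workload model: a short burst inside an hour almost disappears once the hour is reduced to an average.

```python
from statistics import mean


def peak_vs_average(five_minute_hits):
    """Compare the busiest 5-minute bucket with the average bucket of the hour."""
    peak = max(five_minute_hits)
    average = mean(five_minute_hits)
    return peak, average, peak / average


# Twelve 5-minute buckets: steady traffic plus one burst (hypothetical numbers).
buckets = [100] * 11 + [1300]
peak, average, ratio = peak_vs_average(buckets)
print(peak, average, ratio)
```

Here the burst is 6.5 times the average bucket, yet an hourly average of 200 hits per 5 minutes would give no hint that it ever happened.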

So, the more time that has passed since a production issue occurred, the less data you will be able to get about it from your APM system. If you need to build a precise workload model that reflects actual usage, you must extract the activity generated at the service level and store it in a database such as MongoDB.
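The text suggests MongoDB; to keep this sketch runnable with only the Python standard library, it swaps in SQLite. The idea is the same: persist service-level activity per hour and host before the APM tool aggregates it, then query any period later. The table name, columns, and functions are hypothetical.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) a store for hourly, per-host service usage."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS service_usage ("
        " hour TEXT NOT NULL, host TEXT NOT NULL, service TEXT NOT NULL,"
        " hits INTEGER NOT NULL, PRIMARY KEY (hour, host, service))"
    )
    return conn


def save_usage(conn, rows):
    """rows: (ISO-8601 hour, host, service, hits) tuples.
    INSERT OR REPLACE makes re-extracting the same hour idempotent."""
    conn.executemany(
        "INSERT OR REPLACE INTO service_usage (hour, host, service, hits) VALUES (?, ?, ?, ?)",
        rows,
    )
    conn.commit()


def usage_between(conn, start_hour, end_hour):
    """Total hits per host and service for start_hour <= hour < end_hour.
    ISO-8601 strings in one consistent format sort chronologically."""
    return conn.execute(
        "SELECT host, service, SUM(hits) FROM service_usage"
        " WHERE hour >= ? AND hour < ? GROUP BY host, service ORDER BY host, service",
        (start_hour, end_hour),
    ).fetchall()


conn = open_store()
save_usage(conn, [
    ("2023-03-01T01:00", "api-1", "/login", 120),
    ("2023-03-01T02:00", "api-1", "/login", 80),
    ("2023-03-02T01:00", "api-1", "/login", 500),
])
print(usage_between(conn, "2023-03-01T00:00", "2023-03-02T00:00"))  # [('api-1', '/login', 200)]
```

The composite primary key is a design choice: re-running an extraction for an hour overwrites that hour instead of double counting it.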


Extract the traffic from the APM by using APIs

To extract the traffic from my customer's APM system, I used the vendor's API, which offers lots of functions to extract time series data. The query I used to extract the traffic was:

SELECT count(name) AS 'hit/hour', average(databaseCallCount) AS 'Databasecalls', average(externalCallCount) AS 'Externalcalls' FROM Transaction WHERE transactionType = 'Web' FACET name, `request.headers.host` LIMIT 200 SINCE {0} UNTIL {1} TIMESERIES 5 minute

This query returns, in five-minute buckets, the number of calls to each transaction on each host, along with the average number of database and external calls per transaction. In the above example, {0} and {1} are the starting and ending timestamps, respectively.

To save storage space in my prototype tool, I had to aggregate the extracted traffic. To do this, I calculated each service's share of the usage on a given host, hour by hour, but kept only the services that represented more than 60% of the load.
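A minimal sketch of the hour-by-hour ratio calculation, assuming the five-minute results have already been flattened into (timestamp, host, service, hits) tuples. That row shape is an assumption, not the vendor API's response format, and the filtering step is not reproduced here.

```python
from collections import defaultdict
from datetime import datetime


def hourly_ratios(rows):
    """rows: iterable of (datetime, host, service, hits) five-minute samples.
    Returns {(hour, host): {service: share of that host's hits in that hour}}."""
    host_totals = defaultdict(int)
    service_hits = defaultdict(int)
    for ts, host, service, hits in rows:
        # Truncate each five-minute timestamp to the start of its hour.
        hour = ts.replace(minute=0, second=0, microsecond=0)
        host_totals[(hour, host)] += hits
        service_hits[(hour, host, service)] += hits
    ratios = defaultdict(dict)
    for (hour, host, service), hits in service_hits.items():
        total = host_totals[(hour, host)]
        ratios[(hour, host)][service] = hits / total if total else 0.0
    return dict(ratios)


# Hypothetical samples for one host between 1 AM and 2 AM.
rows = [
    (datetime(2023, 3, 1, 1, 0), "api-1", "/login", 30),
    (datetime(2023, 3, 1, 1, 5), "api-1", "/login", 30),
    (datetime(2023, 3, 1, 1, 10), "api-1", "/search", 40),
]
print(hourly_ratios(rows))
```

For these rows, /login accounts for 60% and /search for 40% of the host's traffic in that hour.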

To collect two days of production usage through the API, the process extracted one hour of production traffic at a time (between 1 AM and 2 AM, 2 AM and 3 AM, 3 AM and 4 AM, and so on).
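A sketch of that hour-by-hour iteration, assuming the {0} and {1} placeholders are filled with a format function such as Python's `str.format`, and assuming the API accepts Unix epoch milliseconds for SINCE and UNTIL (the timestamp format isn't stated above). Sending each query to the vendor's API is left out, since that API isn't named here.

```python
from datetime import datetime, timedelta, timezone

# The query shown above; {0} and {1} are the window's start and end.
QUERY_TEMPLATE = (
    "SELECT count(name) AS 'hit/hour', average(databaseCallCount) AS 'Databasecalls', "
    "average(externalCallCount) AS 'Externalcalls' FROM Transaction "
    "WHERE transactionType = 'Web' FACET name, `request.headers.host` LIMIT 200 "
    "SINCE {0} UNTIL {1} TIMESERIES 5 minute"
)


def to_epoch_ms(moment):
    """Convert a timezone-aware datetime to Unix epoch milliseconds."""
    return int(moment.timestamp() * 1000)


def hourly_queries(start, hours):
    """Yield (window_start, query) for consecutive one-hour windows."""
    for i in range(hours):
        window_start = start + timedelta(hours=i)
        window_end = window_start + timedelta(hours=1)
        yield window_start, QUERY_TEMPLATE.format(to_epoch_ms(window_start), to_epoch_ms(window_end))


# Two days of production traffic, one query per hour.
queries = list(hourly_queries(datetime(2023, 3, 1, tzinfo=timezone.utc), 48))
print(len(queries))  # 48
```

Keeping each window to one hour keeps every response small, at the cost of more API calls.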

Once the extraction process completes, the prototype generates a CSV file that presents only the services representing 80% of the load on each host, hour by hour. The idea is to generate a workload model for each targeted service.

From here I could automatically identify the services that needed testing and the objectives in terms of hits per second.
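How the hits-per-second objectives were derived isn't specified. One simple assumption is to take each service's busiest hour and divide by 3,600 seconds; the sketch below does that and writes the result as CSV. The input shape and column names are hypothetical.

```python
import csv
import io


def objectives_csv(hourly_hits):
    """hourly_hits: {(host, service): [hits in hour 1, hits in hour 2, ...]}.
    Returns CSV text with each service's peak hour and target rate in hits/s."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["host", "service", "peak_hour_hits", "target_hits_per_s"])
    for (host, service), counts in sorted(hourly_hits.items()):
        if not counts:
            continue  # no hours recorded for this service
        peak = max(counts)
        writer.writerow([host, service, peak, round(peak / 3600, 2)])
    return out.getvalue()


# Hypothetical hourly counts for two services on one host.
report = objectives_csv({
    ("api-1", "/login"): [3600, 7200, 1800],
    ("api-1", "/search"): [36000],
})
print(report)
```

Using the peak hour rather than the daily average is a deliberate choice: a component test should prove the service survives its busiest observed hour.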

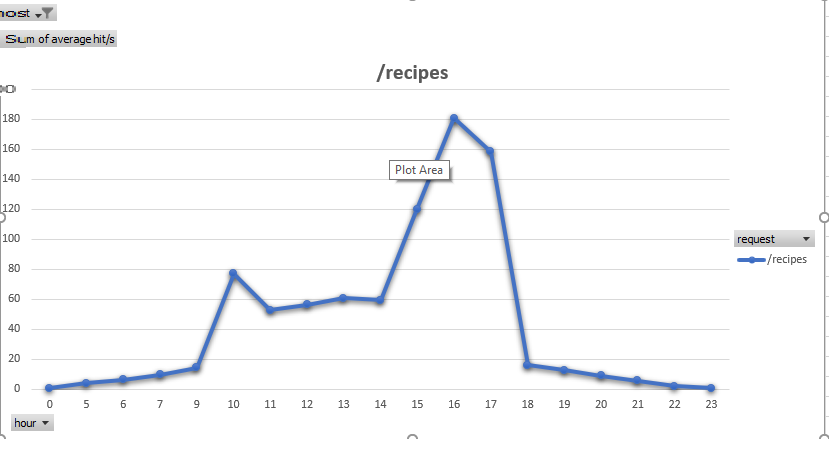

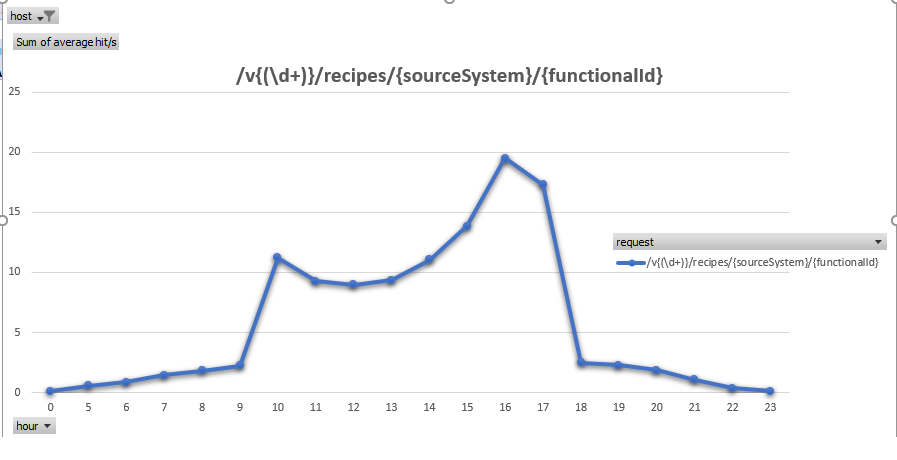

Figure 1. This is the result after extracting traffic from an APM tool using the vendor's API. The generated file lists the most heavily used services in the production environment.

Figure 2. In this example, the graph is filtered by hostname. Filtering by host lets you see the most important APIs per host.

Unfortunately, in this case I was unable to generate the load test scripts because of the missing data. Most APM tools don't store the payload of an HTTP POST request or the parameters used in HTTP GET requests.

This prototype solution helps you set up a continuous testing approach, but it still requires human intervention to finalize the script for each API.

Extracting user flows from HTTP access log files

This approach can also be used to rebuild your end-to-end testing strategy, either by extracting the traffic from the APM tool or by getting data directly from HTTP access log files.

Of course, working with HTTP access log files is more complex, since your access log files must contain the following information:

- HTTP method

- URL

- Source IP address

- Referrer

- User agent

- HTTP session

- Date and time of the request

This information is required to reconstruct user flows and to group individual HTTP requests into those flows.
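As a sketch of that grouping step, assume a hypothetical log format: the Apache "combined" format with the session ID appended as a final quoted field. Requests can then be grouped by session and ordered by time. When no session ID was logged, the sketch falls back to source IP plus user agent, which only approximates a user.

```python
import re
from collections import defaultdict
from datetime import datetime

# Hypothetical format: Apache combined log plus a quoted session ID at the end.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>[A-Z]+) (?P<url>\S+) [^"]*" \d{3} \S+ '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)" "(?P<session>[^"]*)"'
)


def user_flows(lines):
    """Group requests into per-session flows, ordered by request time.
    The referrer is parsed but not used in this sketch."""
    flows = defaultdict(list)
    for line in lines:
        m = LOG_PATTERN.match(line)
        if not m:
            continue  # skip lines that don't match the assumed format
        ts = datetime.strptime(m["time"], "%d/%b/%Y:%H:%M:%S %z")
        # Fall back to IP + user agent when no session ID was logged.
        if m["session"] not in ("", "-"):
            key = m["session"]
        else:
            key = f'{m["ip"]}|{m["agent"]}'
        flows[key].append((ts, f'{m["method"]} {m["url"]}'))
    # Sort each session's requests chronologically and keep only the steps.
    return {key: [step for _, step in sorted(reqs)] for key, reqs in flows.items()}
```

Fed a day's log, it yields one ordered list of steps per session (for example, GET /login followed by GET /cart), which is the raw material for an end-to-end script.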

Working from logs is possible, but it takes more time to extract and aggregate the information, especially when a single production access log file can represent 1.5 GB to 2 GB of data. To reduce the analysis time, you must have the prototype solution running every day so that it continuously stores the aggregated traffic.

Performance engineers can then extract the workload model from the aggregated database using start-date and end-date filters.
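A minimal in-memory sketch of the daily roll-up and the date-range extraction, assuming requests have already been parsed into (timestamp, method, path) tuples. In practice the daily counters would be persisted rather than kept in memory; the function names are hypothetical.

```python
from collections import Counter
from datetime import date, datetime


def aggregate_daily(requests):
    """requests: iterable of (datetime, method, path) parsed from the logs.
    Returns a Counter keyed by (day, method, path), far smaller than the raw log."""
    return Counter((ts.date(), method, path) for ts, method, path in requests)


def workload_between(daily_counts, start, end):
    """Sum stored daily counts per (method, path) for start <= day <= end (inclusive)."""
    totals = Counter()
    for (day, method, path), hits in daily_counts.items():
        if start <= day <= end:
            totals[(method, path)] += hits
    return totals


# Hypothetical parsed requests spread over two days.
daily = aggregate_daily([
    (datetime(2023, 3, 1, 9, 0), "GET", "/login"),
    (datetime(2023, 3, 1, 17, 30), "GET", "/login"),
    (datetime(2023, 3, 5, 9, 0), "POST", "/search"),
])
print(workload_between(daily, date(2023, 3, 1), date(2023, 3, 2)))
```

Only the first two requests fall inside the March 1 to March 2 window, so the result counts two GET /login calls.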

Align performance testing with actual product use

To have a realistic performance testing strategy that covers both continuous testing (component testing) and end-to-end testing, your test and workload model must align with production usage.

Most organizations deploy several tools in production: a monitoring system that collects technical metrics, a tracking system (such as Google Analytics) that collects marketing data, and an APM tool that shows precisely how the application is used. Fortunately for performance engineers, most of those tools have APIs you can use to extract production usage and store it, so you can continuously build the scope of your performance tests.

To learn more, drop in on my talk at PerfGuild, the online performance testing conference, on April 8-9. Can't make it to the live talk? Registered participants can watch video recordings of all talks, including the Q&A sessions that follow, after the event.
